AI ON THE RISE

There are probably not many people who would doubt that we have arrived in an age of Big Data and Artificial Intelligence (AI). Of course, this opens up many previously untapped opportunities, ranging from production to automated driving and everyday applications. In fact, not only digital assistants such as Google Home, Siri or Alexa, but also many web services and apps, smartphones and home appliances, cleaning robots and even toys already use AI or at least some kind of machine learning.

Technology visionaries such as Ray Kurzweil predicted that AI would have the power of an insect brain in 2000, the power of a mouse brain around 2010, human-like brainpower around 2020 and the power of all human brains on Earth before the middle of this century. Many do not share this extremely techno-optimistic view, but it cannot be denied that IBM’s Deep Blue computer beat the chess genius Kasparov back in 1997, IBM’s Watson computer won the knowledge game Jeopardy in 2011, and Google’s AlphaGo system beat the 18-time world champion Lee Sedol in the highly complex strategy game “Go” in 2016 – about 10 to 20 years before many experts had expected this to happen. When AlphaZero managed to outperform AlphaGo without human training, just by playing Go many times against itself, the German news journal “Spiegel” wrote on October 29, 2017: “Gott braucht keine Lehrmeister”[1] [“God does not need a teacher.”].

AI as God?

This was around the time when Anthony Levandowski, a former head of Google’s self-driving car project, founded a religion that worships an AI God.[2] By that time, many considered Google to be almost all-knowing. With the Google Loon project, they were also working on omnipresence. Omnipotence was still a bit of a challenge, but as the world learned by the end of 2015, it was possible to manipulate people’s attention, opinions, emotions, decisions, and behaviors with personalized information.[3] In a sense, our brains had been hacked. However, only in summer 2017 did Tristan Harris, a former member of a Google control room, reveal this in his TED talk “How a handful of tech companies control billions of minds every day”.[4] At the same time, Google was trying to build superintelligent systems and to become something like an emperor over life and death, namely with its Calico project.[5]

Singularity

So, was Google about to give birth to a digital God – or had it done so already?[6] Those believing in the “singularity”[7] and AI as “our final invention”[8] already saw the days of humans as numbered. “Humans, who are limited by slow biological evolution, couldn’t compete and would be superseded,” said the world-famous physicist Stephen Hawking.[9] Elon Musk warned: “We should be very careful about artificial intelligence. If I had to guess at what our biggest existential threat is, it’s probably that.”[10] Bill Gates stated he was “in the camp that is concerned about super intelligence”.[11] And Apple co-founder Steve Wozniak asked: “Will we be the gods? Will we be the family pets? Or will we be ants that get stepped on?”[12] AI pioneer Jürgen Schmidhuber wants to be the father of the first superintelligent robot and appears to believe that we would be like cats shortly after the singularity arrives.[13] Sophia, the female humanoid robot, who is now counted as a citizen of Saudi Arabia, seems to see things similarly (but maybe that was a joke for promotional purposes).[14]

Therefore, it is time to ask the question: what will happen to humans and humanity after the singularity? There are different views on this. Schmidhuber claims superintelligent robots will be as little interested in humans as we are in ants, but this does not, of course, mean that we would not be competing with them for material resources. Others believe the next wave of automation will make millions or even billions unemployed. Combined with the world’s expected sustainability crisis (i.e. predicted shortage of certain resources), this is not good news. Billions of humans might (have to) die early, if the predictions of the Club of Rome’s “Limits to Growth” and other studies were right.[15] This makes the heated debate on ethical dilemmas, algorithms deciding about life and death, about killer robots and autonomous weapons[16] understandable[17] (see discussion below). Thus, will we face something like a “digital holocaust”, where autonomous systems decide about our life based on a citizen score or some other approach? Not necessarily so. Others believe that, in the “Second Machine Age”,[18] humans will experience a period of prosperity, i.e. they would enter some kind of technologically enabled “paradise”, where we would finally have time for friends, hobbies, culture and nature rather than being exploited for and exhausted by work. But even those optimists have often issued warnings that societies would need a new framework in the age of AI, such as a universal basic income.[19] However, it seems we have not made much progress towards a new societal contract – be it with basic income or without.

Transhumanism

Opinions are divided not only about the future of humanity, but also about the future of humans. Some, like Elon Musk, believe that humans would have to upgrade themselves with implanted chips in order to stay competitive with AI (and actually merge with it).[20] In the long run, we would become cyborgs and replace organs (degraded by aging, handicap, or disease) with technological solutions. Over time, a bigger and bigger part of our body would be technologically upgraded, and it would be increasingly impossible to tell humans and machines apart.[21] Eventually, some people believe, it might even become possible to upload the memories of humans into a computer cloud and thereby allow humans to live there forever, potentially connected to several robot bodies in various places.[22]

Others think we would genetically modify humans and upgrade them biologically to stay competitive.[23] Genetic manipulation might also extend life spans considerably (at least for those who can afford it). However, in a so-called “over-populated world” this would increase the existential pressure on others, which brings us back to the life and death decisions mentioned before and, actually, to the highly problematic subject of eugenics.[24]

Overall, it appears to many technology visionaries that humans as we know them today would not continue to exist much longer. All those arguments, however, are based on the extrapolation of technological trends of the past into the future, while we may also experience unexpected developments, for example, something like “networked thinking” – or even an ability to perceive the world beyond our own body, thanks to the increasingly networked nature of our world (where “links” are expected to become more important than the system components they connect, thereby also changing our perception of our world).

Is AI really intelligent?

The above expectations may also be wrong for another reason: perhaps the extrapolations are based on wrong assumptions. Are humans and robots comparable at all, or did we fall prey to our hopes and expectations, to our definitions and interpretations, to our approximations and imitations? Computers process information, while humans think and feel. But is it actually the same? Or do we compare apples and oranges?

Are today’s robots and AI systems really autonomous to the degree humans are autonomous? I would say “no”: at least the systems that are publicly known still depend a lot on human maintenance and external resources provided by humans.

Are today’s AI systems capable of emotions? I would say “no”. Changing color when gently touching the head of a robot, as “Pepper” does, has nothing to do with emotions. Being able to smile or look surprised or angry, or being able to read our facial expressions, is also not the same as “feeling” emotions. And sex robots, I would say, are neither able to feel love nor to love a human or another living being. They can just “make love”, i.e. have sex and talk as if they had emotions. But this is not the same as having emotions, e.g. feeling pain.

Are today’s AI systems creative? I would say “hmmm”. Yes, we know AI systems that can mix cocktails and can generate music that sounds similar to Bach or any other composer, or create “paintings” that look similar to a particular artist’s body of work. However, these creations are, in a sense, variations of a lot of inputs that have been fed into the system before. Without these inputs, I do not expect those systems to be creative by themselves. Did we really see some AI-created piece of art that blew our minds, something entirely new, not seen or heard before? I am not sure.

Are today’s AI systems conscious? I would say “no”. We do not even know exactly what consciousness is and how it works. Some people think consciousness emerges when many neurons interact, much as waves arise when many water molecules interact in an environment exposed to wind. Other people, among them some physicists, think that consciousness is related to perception – a measurement process rooted in quantum physics. If this were the case, as Schrödinger’s famous hypothetical “cat experiment” suggests, reality would be created by consciousness, not the other way round. Then, the “brain” would be something like a projector producing our perceived reality.

So far, we do not even know whether AI systems understand texts they process. When they generate text or translations, they are typically combining existing elements of a massive database of human-generated texts. Without this massive database of texts produced by humans, AI-based text and translations would probably not sound like human language at all. It is kind of obvious that our brain works in a very different way. While we don’t have nearly as many texts stored in our brain, we can nevertheless speak fluently. Suggesting that we approximately understand the brain because we can now build deep learning neural networks that communicate with humans seems to be misleading.

Are today’s AI systems intelligent at least? I would say “no”. The systems that are publicly known are “weak” AI systems that are very powerful in particular tasks and often super-human in specific aspects. However, so far, “strong” AI systems that can flexibly adjust to all sorts of environments and tasks, as humans can, are not publicly known. To my knowledge, we also don’t have AI systems that have invented anything like the physical laws of electrodynamics, quantum mechanics, etc.

In conclusion, I would say that today, even humanoid robots are not like humans. They are a simulation, an emulation, an imitation, or approximation, but it is hard to tell how similar they really are. Recent reports even suggest that humans are often considerably involved in generating “AI” services – and it would be more appropriate to speak of “pseudo-AI”.[25] I would not be surprised at all if our attempts to build human-like beings in silico finally made us aware of how different humans really are, and what makes us special. In fact, this is the lesson I expect to be learned from technological progress. What is consciousness? What is love? We might get a better understanding of such questions, of who we really are and our role in the universe, if we can’t just build it the way we have tried it so far.

What Is Consciousness?

I sometimes speculate the world might be an interpretation of higher-dimensional data (as elementary particle physics actually suggests). Then, there could be different kinds of interpretations, i.e. different ways to perceive the world, depending on how we learn to perceive it. Imagine the brain to work like a filter – and think of Plato’s allegory of the cave,[26] where people just see two-dimensional shadows of a three-dimensional world and, hence, would find very different “natural laws” governing what they see. What if our world was not three-dimensional, but we would see only a three-dimensional projection of a higher-dimensional world? Then, one day, we may start seeing the world in an entirely different way,[27] for example, from the perspective of quantum logic rather than binary logic. For such a transition in consciousness to take place, we would probably have to learn to interpret weaker signals than what our five senses send to our brain. Then, the permanent distractions by the attention economy would just be the opposite of what would be needed to advance humanity.

Let me make this thought model a bit more plausible: Have you ever wondered why Egyptian and other ancient paintings looked flat, i.e. two-dimensional, for hundreds of years, until suddenly three-dimensional-looking perspective was invented and became the new standard? What if ancient people really saw the world “with different eyes”? And what if this were to happen again? Remember that the invention of photography was not the end of painting. Instead, this invention freed the arts from naturalistic representation, and entirely new painting styles were invented, such as impressionistic and expressionistic ones. Hence, will the creation of humanoid robots finally free us from our mechanistic, materialistic view of the world, as we learn how different we really are?

So, if we are not just “biological robots”, other fields besides science, engineering, and logic become important, such as psychology, sociology, history, philosophy, the humanities, ethics, and maybe even religion. Trying to reinvent society and humanity without the proper consideration of such knowledge could easily end in major mistakes and tragedies or crises of historical proportions.

CAN WE TRUST IT?

Big Data Analytics

In a 2008 article in “Wired” magazine, Chris Anderson claimed that we would soon see the end of theory, and the data deluge would make the scientific method obsolete.[28] If one just had enough data, certain people started to believe, data quantity could be turned into data quality, and, therefore, Big Data would be able to reveal the truth by itself. In the past few years, however, this paradigm has been seriously questioned. As data volume increases much faster than processing power, there is the phenomenon of “dark data”, which will never be processed and, hence, it will take scientists to decide which data should be processed and how.[29] In fact, it is not trivial at all to distill raw data into useful information, knowledge and wisdom. In the following, I will describe some of the problems related to the question “how to connect the dots”.

It is frequently assumed that more data and more model parameters are better to get an accurate picture of the world. However, it often happens that people “can’t see the forest for the trees”. “Overfitting”, where one happens to fit models to random fluctuations or otherwise meaningless data, can easily happen. “Sensitivity”, where outcomes of data analyses change significantly when some data points are added or removed, or when another algorithm or computer hardware is used, is another problem. A third problem is errors of the first and second kind, i.e. “false positives” (“false alarms”) and “false negatives” (cases where alarms should go off, but fail to do so). A typical example is “predictive policing”, where false positives are overwhelming (often above 99 percent), and dozens of people are needed to clean the suspect lists.[30] And these are by far not all the problems …
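Why false alarms can dominate even when a classifier looks accurate is a matter of base rates. The following minimal sketch applies Bayes' rule to purely hypothetical numbers (a prevalence of 1 in 10,000 and a classifier that is right 99 percent of the time in both directions); neither the figures nor the function name come from any real predictive policing system.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Share of raised alarms that are true positives (Bayes' rule)."""
    true_positives = prevalence * sensitivity
    false_positives = (1.0 - prevalence) * (1.0 - specificity)
    return true_positives / (true_positives + false_positives)


# Hypothetical illustration values, not taken from any real system:
# 1 in 10,000 people is a real case, 99% of real cases are detected,
# and 1% of everyone else is wrongly flagged.
ppv = positive_predictive_value(
    prevalence=1e-4, sensitivity=0.99, specificity=0.99
)
false_alarm_share = 1.0 - ppv
print(f"Share of alarms that are false: {false_alarm_share:.1%}")
```

Under these assumptions, roughly 99 out of 100 alarms are false, simply because the group of innocent people is so much larger than the group of real cases.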

Correlation vs. Causality

In Big Data, it is easy to find patterns and correlations. But what do they actually mean? Say, one finds a correlation between two variables A and B. Then, does A cause B or B cause A? Or is there a third factor C, which causes A and B? For example, consider the correlation between the number of ice-cream-eating children and the number of forest fires. Forbidding children to eat ice cream will obviously not reduce the number of forest fires at all – despite the strong correlation. It is obviously a third factor, outside heat, which causes both increased ice cream consumption and forest fires.
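The ice cream example can be reproduced with simulated data. In the sketch below, heat drives both ice cream consumption and forest fires, while the two have no direct link; all coefficients and noise levels are invented for illustration. The raw correlation is strong, but the partial correlation, which controls for heat, is close to zero.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def partial_correlation(a, b, c):
    """Correlation of a and b after removing the linear effect of c."""
    r_ab, r_ac, r_bc = pearson(a, b), pearson(a, c), pearson(b, c)
    return (r_ab - r_ac * r_bc) / math.sqrt((1 - r_ac**2) * (1 - r_bc**2))


rng = random.Random(0)  # fixed seed so the run is repeatable
# Hypothetical model: temperature is the common cause (factor C).
heat = [rng.uniform(0, 35) for _ in range(5000)]
ice_cream = [2.0 * h + rng.gauss(0, 10) for h in heat]  # depends on heat only
fires = [0.5 * h + rng.gauss(0, 3) for h in heat]       # depends on heat only

raw = pearson(ice_cream, fires)
controlled = partial_correlation(ice_cream, fires, heat)
print(f"raw correlation:          {raw:.2f}")
print(f"controlling for the heat: {controlled:.2f}")
```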

Finally, there may be no causal relationship between A and B at all. The bigger a data set, the more patterns will be contained just by coincidence, and this could be wrongly interpreted as meaningful or, as some people would say, as a signal rather than noise.[31] In fact, spurious patterns and correlations are quite frequent.[32]
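How quickly coincidental patterns accumulate can be checked with pure noise. The sketch below generates independent random series and counts the pairs that nevertheless look strongly correlated; the number of variables, the series length and the threshold of 0.6 are arbitrary choices made for illustration.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def count_spurious_pairs(n_variables, n_observations, threshold, seed=0):
    """Count pairs of independent noise series with |r| above threshold."""
    rng = random.Random(seed)
    series = [
        [rng.gauss(0, 1) for _ in range(n_observations)]
        for _ in range(n_variables)
    ]
    strong = 0
    for i in range(n_variables):
        for j in range(i + 1, n_variables):
            if abs(pearson(series[i], series[j])) > threshold:
                strong += 1
    total_pairs = n_variables * (n_variables - 1) // 2
    return strong, total_pairs


# 100 unrelated variables with 20 observations each (illustrative sizes).
strong, total = count_spurious_pairs(100, 20, threshold=0.6)
print(f"{strong} of {total} pairs look strongly correlated by chance alone")
```

Since the number of pairs grows quadratically with the number of variables, adding more variables to a data set adds more such coincidences, even though none of them means anything.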

Nevertheless, it is, of course, possible to run a society based on correlations. The application of predictive policing may be seen as an example. However, the question is whether this would really serve society well. I don’t think so. Correlations are frequent, while causal relationships are not. Therefore, using correlations as the basis of certain kinds of actions is quite restrictive. It unnecessarily restrains our freedom.

Trustable AI

There has been the dream that Big Data is the “new oil” and Artificial Intelligence something like a “digital motor” running on it. So, if it is difficult for humans to make sense of Big Data, AI might be able to handle it “better than us”. Would AI be able to automate Big Data analytics? The answer is, partly.

In recent years, it was discovered that AI systems would often discriminate against women, non-white people, or minorities. This is because they are typically trained with data of the past. That is problematic, since learning from the past may stabilize a system that we should actually replace with something else, given that today’s system is not at all sustainable.

Lack of explanation is another important issue. For example, you may get into the situation that your application for a loan or life insurance is turned down, but nobody can explain to you why. The salespeople would just be able to tell you that their AI system has recommended them to do so. The reason may be that two of your neighbors had difficulties paying back their loans. But this is again mixing up correlations and causal relationships. Why should you suffer from this? Hence, experts have recently pushed for explainable results under labels such as “trustable AI”. So far, however, one may say we are still living in a “blackbox society”.[33]

Profiling, Targeting, and Digital Twins

Since the revelations of Edward Snowden, we know that we have all been targets of mass surveillance, and based on our personal data, “profiles” of us have been created – no matter whether we wanted this or not.[34] In some cases, such profiles have not only been databases or unstructured data about us. Instead, “digital doubles”, i.e. computer agents emulating us, have been created of all people in the world (as much as this was possible). You may imagine this as a black box that has been created for everyone, which is continuously fed with surveillance data and learns to behave like the human it is imitating. Such platforms may be used to simulate countries or even the entire world. One of these systems is known under the name “Sentient World”.[35] It contains highly detailed profiles of individuals. Services such as “Crystal Knows” may give you an idea.[36] Detailed personal information can be used to personalize information and to manipulate our attention, opinions, emotions, decisions and behaviors.[37] Such “targeting” has been used for “neuromarketing”[38] and also to manipulate elections.[39] Furthermore, it plays a major role in today’s information wars and the current fake news epidemic.[40]

Data Protection?

It seems that the EU General Data Protection Regulation (GDPR) should have protected us from mass surveillance, profiling and targeting. In fact, the European Court of Human Rights has ruled that past mass surveillance as performed by the GCHQ was unlawful.[41] However, it seems that lawmakers have come up with new reasons for surveillance such as being the friend of a friend of a friend of a suspected criminal, where riding a train without a ticket might be enough to consider someone a criminal or suspect.

Moreover, it is now basically impossible to use the Internet without agreeing to Terms of Use beforehand, which typically forces you to agree with the collection of personal data, even if you don’t like it – otherwise you will not get any service. The personal data collected by companies, however, will often be aggregated by secret services, as Edward Snowden’s revelations about the NSA have shown. In other words, it seems that the GDPR, which claims to protect us from unwanted collection and use of personal data, has actually enabled it. Consequently, there are huge amounts of data about everyone, which can be used to create digital doubles.

Are our personal profiles reliable at least? How similar to us are our digital twins really? Some skepticism is in order. We actually don’t know exactly how well the learning algorithms, which are fed with our personal data, converge. Social networks often have features similar to power laws. As a result, the convergence of learning algorithms may not be guaranteed. Moreover, when measurements are noisy (which is typically the case), chances that digital twins behave identically to us are not very high. This does not, of course, necessarily exclude that averages or distributions of behaviors may be rather accurately predicted (but there is no guarantee).

Scoring, Citizen Scores, Superscores

The approach of “scoring” goes a step further. It assesses people based on personal (e.g. surveillance) data and attributes a certain economic or societal value to them. People would be treated according to their score. Their lives would be “curated”. Only people with a high enough score would get access to certain products or services; others would not even see them on their digital devices at all, or they would see a downgraded offer. Personalized pricing is just one example of the personalization of our digital world.

According to my assessment, scoring is not compatible with human rights, particularly human dignity (see below).[42] However, you can imagine that there are currently quite a lot of scores about you. Each company working with personal data may have several of them.[43] You may have a consumer score, a health score, an environmental footprint, a social media score, a Tinder score, and many more. These scores may then be used to create a superscore, by aggregating different scores into an index.[44] In other words, individuals would be represented by one number, which is often referred to as a “Citizen Score”.[45] This practice was first revealed as the “Karma Police Program”[46] on September 25, 2015, the day on which Pope Francis demanded the UN Agenda 2030, a set of 17 Sustainable Development Goals, in front of the UN General Assembly.[47] China is currently testing the Citizen Score approach under the name “Social Credit Score”.[48] The program may be seen as an attempt to make citizens obedient to the government’s wishes. This has been criticized as data dictatorship[49] or technological totalitarianism.[50]

A Citizen Score establishes, in principle, a Big-Data-driven neo-feudalistic order.[51] Those with a high score will get access to good offers, products, and services, and basically have anything they desire. Those with a low score may be stripped of their human rights and may be deprived of certain opportunities. This may include such things as travel visas to other countries, job opportunities, permission to use a plane or fast train, Internet speed, or certain kinds of medical treatment. In other words, high-score citizens will live kind of “in heaven”, while a large number of low-score citizens may experience something like “hell on Earth”. It’s something like a digital judgment day scenario, where you get negative points if you cross a red pedestrian traffic light, if you read critical political news, or if you have friends who read critical political news, to give some realistic examples.

The idea behind this is to establish “total justice” (in the language of the Agenda 2030: “strong institutions”), particularly for situations when societies are faced with scarce resources and necessary restrictions. However, a Citizen Score will not do justice to people at all, as these are different by nature. All the arbitrariness of a superscore lies in the weights of the different measurements that go into the underlying index.[52] These weights will be to the advantage of some people and to the disadvantage of others.[53] A one-dimensional index will brutally oversimplify the nature and complexity of people, and tries to make everyone the same, while societies thrive on differentiation and diversity. It is clear that scoring will treat some people very badly, and in many cases without good reasons (remember my notes on predictive policing above). This would also affect societies in a negative way. Creativity and innovation (which challenge established ways of doing things) are expected to go down. This will be detrimental to changing the way our economy and society work and, thereby, obstruct the implementation of a better, sustainable system.
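The arbitrariness of the weights can be made concrete with a toy calculation. In the sketch below, two hypothetical people with invented sub-scores are ranked by a weighted average; the score names, values and weight sets are all assumptions made for illustration and do not describe any real scoring system. Changing nothing but the weights reverses the ranking.

```python
def superscore(scores, weights):
    """Weighted average of sub-scores, i.e. a one-dimensional index."""
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


# Invented sub-scores on a 0..1 scale for two hypothetical people.
person_1 = {"consumer": 0.9, "health": 0.4, "social_media": 0.5}
person_2 = {"consumer": 0.4, "health": 0.9, "social_media": 0.5}

# Two equally defensible (and equally arbitrary) weightings.
weights_a = {"consumer": 0.6, "health": 0.2, "social_media": 0.2}
weights_b = {"consumer": 0.2, "health": 0.6, "social_media": 0.2}

for label, weights in (("weights A", weights_a), ("weights B", weights_b)):
    s1 = superscore(person_1, weights)
    s2 = superscore(person_2, weights)
    leader = "person 1" if s1 > s2 else "person 2"
    print(f"{label}: person 1 = {s1:.2f}, person 2 = {s2:.2f}, ahead: {leader}")
```

Neither person has changed between the two runs; only the choice of weights decides who counts as the "better" citizen.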

Automation vs. Freedom

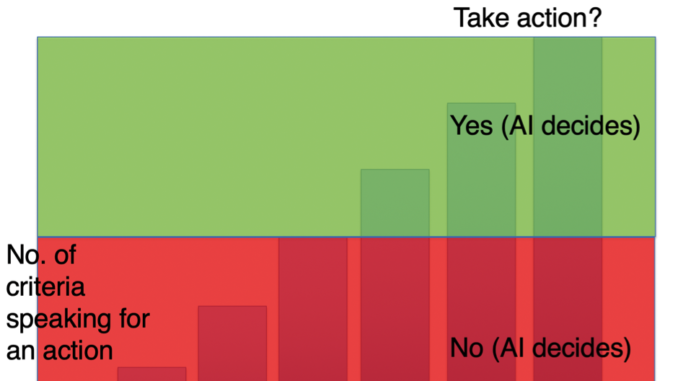

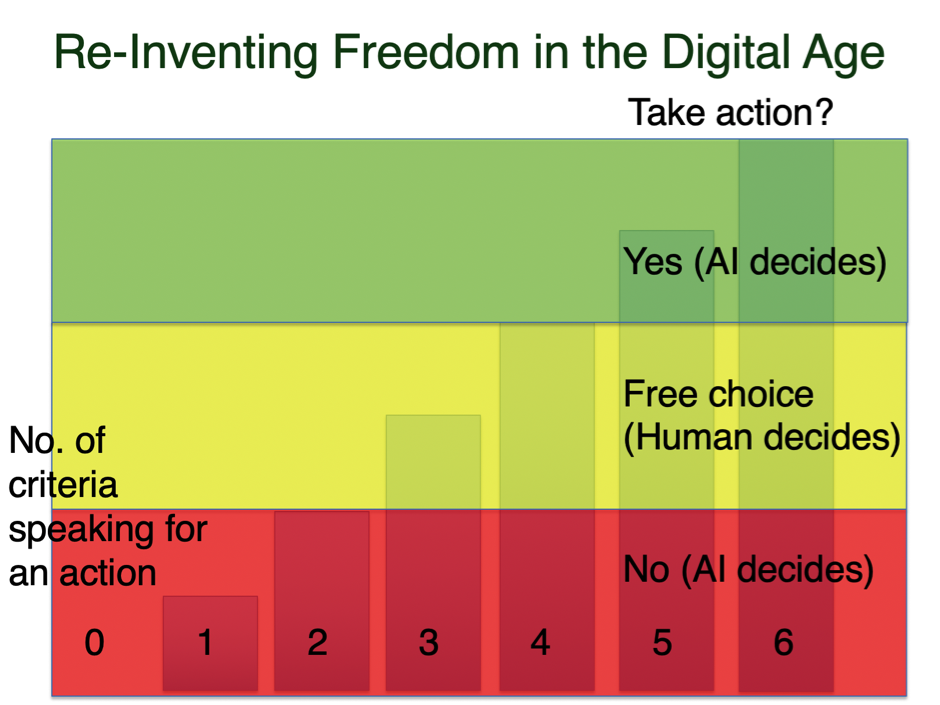

In the age of AI, there is a great temptation to automate processes of all kinds. This includes the automation of decisions. Within the framework of Smart Cities and Smart Nations concepts, there are even attempts to automate societies, i.e. to run them almost like a machine.[54] Such automation of decisions is often (either explicitly or implicitly) based on some index or one-dimensional decision function with one or several decision thresholds. Let us assume you are measuring 6 different quantities to figure out whether you should decide “yes” or “no” in a certain situation. Then, of course, you could specify that a measurement-based algorithm should decide for “no”, if between 0 and 3 measurements speak for “yes”, while it should decide for “yes”, if between 4 and 6 measurements recommend to choose “yes” (see Fig. 1). This may sound plausible, but it eliminates the human freedom of decision-making completely.

Instead, it would be more appropriate to automate only the “sure” cases (e.g. “no”, if a clear minority of measurements recommend “yes”, but “yes” if a clear majority of measurements recommend “yes”). In contrast, the “controversial” cases should prompt human deliberation (also if some additional fact comes to light that makes it necessary to revisit everything). Such a procedure is justified by the fact that there is no scientific method to fix the weights of different measurements exactly (given the problem of finite confidence intervals). Figure 2 shows the clear “yes” cases in green, the clear “no” cases in red, and the controversial cases in yellow.

The suggested semi-automated approach would reduce human decision workload by automation where it does not make sense to bother people with time-consuming decisions, while it would give people more time to decide important affairs that are not clear, but may be relevant for choosing the future that feels right. I believe this is important for a future that works well for humans, who derive happiness in particular from exercising autonomous decisions and good relationships.[55]
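The difference between the two procedures can be sketched in a few lines. The fully automated rule uses the threshold from the six-measurement example above (4 or more "yes" measurements decide "yes"). The cut-offs for the semi-automated rule (at most 1 "yes" for a clear "no", at least 5 for a clear "yes") are assumptions chosen for illustration, since the text only speaks of a clear minority and a clear majority.

```python
def decide_fully_automated(measurements, yes_threshold=4):
    """Single threshold: every case is decided by the algorithm."""
    yes_count = sum(1 for m in measurements if m)
    return "yes" if yes_count >= yes_threshold else "no"


def decide_semi_automated(measurements, clear_no_max=1, clear_yes_min=5):
    """Automate only the clear cases; hand controversial ones to humans.

    clear_no_max and clear_yes_min are hypothetical cut-offs.
    """
    yes_count = sum(1 for m in measurements if m)
    if yes_count <= clear_no_max:
        return "no"
    if yes_count >= clear_yes_min:
        return "yes"
    return "human deliberation"


# All possible numbers of "yes" votes among 6 measurements.
for yes_votes in range(7):
    measurements = [True] * yes_votes + [False] * (6 - yes_votes)
    print(
        yes_votes,
        decide_fully_automated(measurements),
        decide_semi_automated(measurements),
    )
```

With these cut-offs, four of the seven possible outcomes are still decided automatically, while the three middle outcomes are returned to people for deliberation.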

Learning to Die?

Indices based on multiple measurements or data sets are also being used to recommend prison sentences,[56] to advise on whether a medical operation is economic, given the overall health and age of a person, or even to make life-or-death decisions.[57] One scenario that has recently been obsessively discussed is the so-called “trolley problem”.[58] Here, an extremely rare situation is assumed, where an autonomous vehicle cannot brake quickly enough, and one person or another will die (e.g. one pedestrian or another one, or the car driver). Given that, thanks to pattern recognition and other technology, cars might now – or at least soon – distinguish between age, race, gender, social status etc., the question is whether the car should save a young healthy manager, while sacrificing an old, ill, unemployed person. Note that the consideration of such factors is not in accordance with the equality principle of democratic constitutions[59] and also not compatible with classical medical ethics.[60] Nevertheless, questions like these have been recently raised in what was framed as the “Moral Machine Experiment”,[61] and recent organ transplant practices already apply new policies considering previous lifestyle.[62]

Now, assume that we would put such data-based life-and-death situations into laws. Can you imagine what will happen if such principles, originally intended to save human lives, were applied in possible future scenarios characterized by scarce resources? Then, the algorithm would turn into a killer algorithm bringing about a “digital holocaust”, which would sort out old and ill people, and probably also people of low social status, including certain minorities. This is the true danger of creating autonomous systems that may seriously interfere with our lives.[63] It does not take killer robots for autonomous systems to become a threat to our lives, in case the supply of resources falls short.

A revolution from above?

The above scenario is, unfortunately, not just a fantasy scenario. It has already been seriously discussed in certain circles for some time. Some books suggest we should learn to die in the age of the Anthropocene.[64] Remember that the Club of Rome’s “Limits to Growth” study suggests we will see a serious economic and population collapse in the 21st century.[65] Accordingly, billions of people would die early. I have heard similar assessments from various experts, so we should not just ignore these forecasts. Some PhD theses have even discussed AI-based euthanasia.[66] There also seems to be a connection to the eugenics agenda.[67] Sadly, it seems that the argument to “save the planet” is now increasingly being used to justify the worst violations of human rights. China, for example, has recently declined to sign a human rights declaration in a treaty with Switzerland,[68] and has even declined to receive an official German human rights delegation in China.[69] Certain political forces in other countries (such as Turkey, Japan, UK and Switzerland) have also started to propose human rights restrictions.[70] In the meantime, many cities and even some countries (such as Canada and France) have declared a state of “climate emergency”, which may eventually end with emergency laws and human rights restrictions.

Such political trends remind one of the proposed “revolution from above” that has been demanded by the Club of Rome already decades ago.[71] In the meantime, this system – based on technological totalitarianism such as mass surveillance and citizen scores – seems to be almost completed.[72] Note that the negative climate impact of the oil industry was already known about 40 years ago,[73] and the problem of limited resources as well.[74] Nevertheless, it was decided to export the non-sustainable economic model of industrialized nations to basically all areas of the world, and the energy consumption has dramatically been increased since then.[75] I would consider this reckless behavior and say that politics has clearly failed to ensure responsible and accountable business practices around the world, such that human rights now seem to be at stake.

DESIGN FOR VALUES

Human Rights

Let us now recall what was the reason to establish human rights in the first place. They actually resulted from terrible experiences such as totalitarian regimes, horrific wars, and the holocaust. The establishment of human rights as the foundation of modern civilization, reflected also by the UN’s Universal Declaration of Human Rights,[76] was an attempt to prevent the repetition of such horrors and evil. However, the promotion of materialistic consumption-driven societies by multi-national corporations led to the current (non-) sustainability crisis, which has become an existential threat, which might cause the early death of hundreds of millions, if not billions of people.

Had industrialized countries reduced their resource consumption by just 3% annually since the early 1970s, our world would be sustainable by now, and discussions about overpopulation, climate crises and ethical dilemmas would be baseless. Had we engaged in the creation of a circular economy and used alternative energy production schemes early on rather than following the wishes of big business, our planet could easily manage our world’s population. Unfortunately, the environmental movement that came up decades ago was not very welcome in the beginning, and in some countries this is even true today. I wonder what is so bad about living in harmony with nature?
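As a rough check of what a 3% annual reduction amounts to, the following minimal sketch compounds a constant yearly cut. The 50-year horizon (roughly 1970 to 2020), the starting level normalised to 1.0, and the function name `remaining_fraction` are assumptions made for illustration; the text does not specify which consumption level would count as sustainable.

```python
def remaining_fraction(annual_reduction: float, years: int) -> float:
    """Fraction of the initial consumption level left after `years` of
    reducing consumption by `annual_reduction` (e.g. 0.03) each year."""
    return (1.0 - annual_reduction) ** years


# Assumed horizon: 50 years of 3% annual reductions.
fraction_left = remaining_fraction(0.03, 50)
print(f"Remaining share of initial consumption: {fraction_left:.1%}")
```

Under these assumptions, consumption would have fallen to roughly a fifth of its initial level, which gives a sense of how strongly a seemingly small annual rate compounds over five decades.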

Happiness vs. Capitalism

Psychology knows that autonomy and good relationships – you could also call it “love” – are the main factors promoting happiness.[77] It is also known that (most) people have social preferences.[78] I believe that if our society were designed and managed in a way that supports the happiness of people, it would also be more sustainable, because happy people do not consume that much. From the perspective of business and banks, of course, less consumption is a problem and, therefore, consumption is being pushed in various ways: by promoting individualism through education, which causes competition rather than cooperation between people and makes them frustrated; they will then often consume to compensate for their frustration. Furthermore, through personalized methods such as “big nudging”, also known as neuromarketing, advertisements have become extremely effective in driving us into more consumption. These conditions could, of course, be changed.

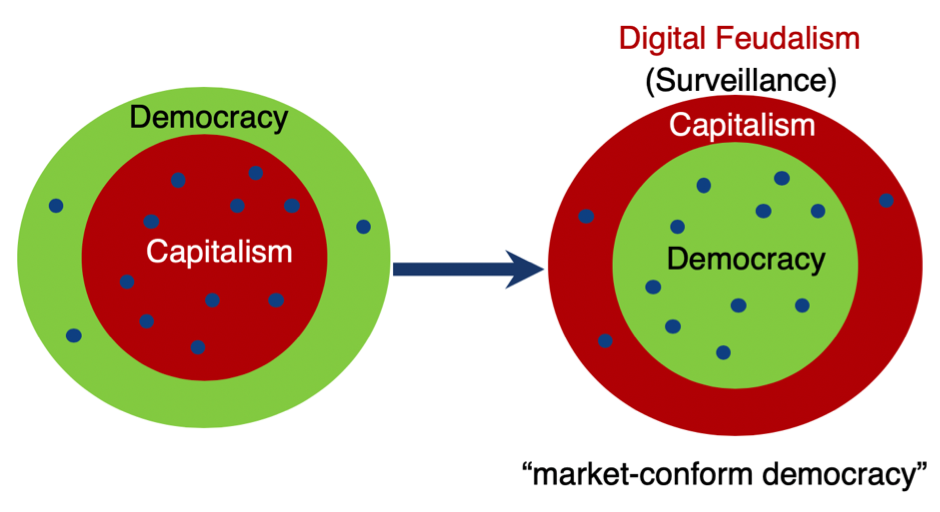

I would, therefore, say the average human is not the enemy of humanity (and nature), in contrast to what the book “The First Global Revolution” by the Club of Rome claimed.[79] It is rather the calculus of selfishness followed by companies, known as profit maximization or utilitarian thinking, often neglecting “externalities”, which has driven the world to the edge. In the framework of surveillance capitalism (see Fig. 3), this system has been further perfected. Now, human personality and society (“social capital”) have become the resources that are being exploited. People’s lives are being downloaded for free by means of mass surveillance, and our personal data are then being sold to companies that we don’t know and that probably do a lot of things we would not approve of. We have become the victims of this system, and we have to pay for it by means of data and by paying for the products we buy. It is often said that if services are free, we are the product. I have serious doubts that such a system is compatible with human dignity, and it will probably cause a lot of problems.

For such reasons, I am also critical of utilitarian ethics, which makes numerical optimization, i.e. business-like thinking, the foundation of “ethical” decision-making. Such an approach gives everyone and everything (including humans and their organs) a certain value or price and implies that it would even be justified to kill people in order to save others, as the “trolley problem” suggests. In the end, this will probably turn peaceful societies into a state of war or “unpeace”, in which only one thing counts (namely, money, or whatever is chosen as utility function – it could also be power or security, for example).

This is the main problem of the utilitarian approach. While it aims to optimize society, it will actually destroy it little by little. The reason is that a one-dimensional optimization and control approach is not suited to handle the complexity of today’s world. Societies cannot be steered like a car, where everyone moves right or left, as desired.[80] For societies to thrive, one needs to be able to steer in different directions at the same time, such as better education, improved health, reduced consumption of non-renewable energy, more sustainability, and increased happiness. This requires a multi-dimensional approach rather than one-dimensional optimization, and therefore, cities and countries are managed differently from companies (and live much longer!). The aforementioned requirement of pluralism has so far been best fulfilled by democratic forms of organization. However, a digital upgrade of democracies is certainly overdue. For this reason, it has been proposed to build “digital democracies” (or democracies x.0, where x is a natural number greater than 1).[81]

Human Dignity

It is for the above reasons that human dignity has been put first in many constitutions. Human dignity is considered to be a right that is given to us by birth, and it is considered to be the very foundation of many societies. It is the human right that stands above everything else. Politics and other institutions must take action to protect human dignity from violations by public institutions and private actors, also abroad. Societal institutions lose their legitimacy if they do not engage effectively in this protection.[82] Improving human dignity on short, medium and long time scales should, therefore, be the main goal of political and human action. This is obviously not just about protecting or creating jobs, but it also calls for many other things, such as sustainability.

The question is, what is human dignity really about? It means, in particular, that humans are not supposed to be treated like animals, objects or data. They have the right to be involved in decisions and affairs concerning them, including the right of informational self-determination. Exposing people to surveillance, while not giving them the possibility to easily control the use of their personal data, is a massive violation of human dignity. Moreover, human dignity implies certain kinds of freedoms that need to be protected not only in their individual interest, but also to avoid the abuse of power and to ensure the functioning and well-being of society altogether.

Of course, freedoms should be exercised with responsibility and accountability, and this is where our current economic system fails. It diffuses, reduces and dissolves responsibility, in particular with regard to the environment. So-called externalities, i.e. external (often unwanted, negative) [side] effects are not sufficiently considered in current pricing and production schemes. This is what has driven our world to the edge, as the current environmental crisis shows in many areas of the world.

Furthermore, human dignity, like consciousness, creativity and love, cannot be quantified well. That is, what makes us human is not well reflected by the Big Data accumulated about our world. Therefore, a mainly data-driven and AI-controlled world largely ignores what is particularly important for us and our well-being. This is not expected to lead to a society that serves humans well.

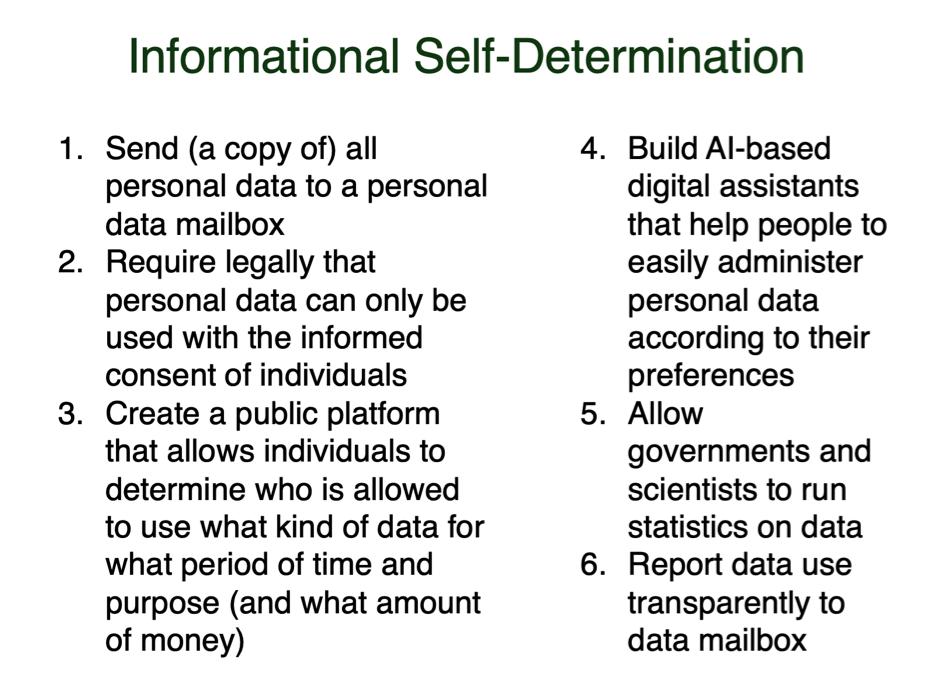

While many people are talking about human-centric AI and a human-centric society, they often mean personalized information, products and services, based on profiling and targeting. This is getting things wrong. The justification of such targeting is often to induce behavioral change towards better environmental and health conditions, but it is highly manipulative, often abused, and violates privacy and informational self-determination. In the ASSET project [EU Grant No. 688364], instead, we have shown that behavioral change (e.g. towards the consumption of more sustainable and healthy products) is also possible based on informational self-determination and on respect for privacy.[83] I do, therefore, urge public and private actors to push informational self-determination forward quickly.

Informational Self-Determination

Informational self-determination is a human right that follows directly from human dignity, and this cannot be given away under any circumstances (in particular not by accepting certain Terms of Use). Nevertheless, in times of Big Data and AI, we have lost self-determination in the digital and real world little by little. This must be corrected quickly.

Figure 4 summarizes a proposed platform for informational self-determination, which would give control over our personal data and our digital doubles back to us. With this, all personalized services and products would be possible, but companies would have to convince us to share some of our data with them for a specific purpose, period of time, and perhaps price. The resulting competition for consumer trust would eventually promote a trustable digital society.

Figure 4: Main suggested features of a platform for informational self-determination.

The platform would also create a level playing field: not only big businesses, but also SMEs, spinoffs, NGOs, scientific institutions and civil society could work with the data treasure, provided they get data access approved by the people (though many people may actually select this by default). Overall, such a platform for informational self-determination would promote a thriving information ecosystem that catalyses combinatorial innovation.

Government agencies and scientific institutions would be allowed to run statistics. Even a benevolent superintelligent system that helps desirable activities (such as social and environmental projects and the production of public goods) to succeed more easily while not interfering with the free will of people would be possible. Such a system should be designed for values such as human dignity, sustainability, fairness, as well as further constitutional and cultural values that support the development of creativity and human potential with societal and global benefits in mind.

Data management would be done by means of a personalised AI system running on our own devices, i.e. digital assistants that learn our privacy preferences and the companies and institutions we trust or don’t trust. Our digital assistants would comfortably preconfigure personal data access, and we could always adapt it.
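To make the idea of purpose-, time- and price-bound data sharing more concrete, here is a minimal sketch of how a digital assistant might record and check such permissions. The schema (`DataGrant`), the function `is_access_allowed`, the example recipients and data categories are all hypothetical and not taken from any existing platform; the sketch only illustrates a deny-by-default rule.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class DataGrant:
    """One permission a person gives to one recipient (hypothetical schema)."""
    recipient: str      # company or institution receiving access
    category: str       # kind of personal data, e.g. "location"
    purpose: str        # the specific purpose the person agreed to
    valid_from: date    # first day on which access is permitted
    valid_until: date   # last day on which access is permitted
    price: float = 0.0  # optional compensation agreed for the access


def is_access_allowed(grants, recipient, category, purpose, on_day):
    """Deny by default: allow access only if some grant matches the
    recipient, data category and purpose, and is valid on `on_day`."""
    return any(
        g.recipient == recipient
        and g.category == category
        and g.purpose == purpose
        and g.valid_from <= on_day <= g.valid_until
        for g in grants
    )


# Example: one grant to a hypothetical research institute for one year.
grants = [
    DataGrant("city-research-institute", "mobility", "traffic statistics",
              date(2020, 1, 1), date(2020, 12, 31)),
]
```

With this example, a request by the same institute for the same purpose in mid-2020 would be allowed, while a request for a different purpose, by a different recipient, or after the grant has expired would be refused. A real system would additionally need authentication, auditing and a way to revoke grants.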

Over time, if implemented well, such an approach would establish a thriving, trustable digital age that empowers people, companies and governments alike, while making quick progress towards a sustainable and peaceful world.[84]

Design for Values

As our current world is challenged by various existential threats, innovation seems to be more important than ever. But how to guide innovation in a way that creates large societal benefits while keeping undesirable side effects small? Regulation often does not appear to work well. It is often quite restrictive and typically comes late, which is problematic for businesses and society alike.

However, it is clear that we need responsible innovation.[85] This requires pro-actively addressing relevant constitutional and ethical, social and cultural values already in the design phase of new technologies, products, services, spaces, systems, and institutions.

There are several reasons for adopting a design for values approach:[86] (1) the avoidance of technology rejection due to a mismatch with the values of users or society, (2) the improvement of technologies/design by better embodying these values, and (3) the generation or stimulation of values in users and society through design.

Value-sensitive design[87] – and ethically aligned design[88] – have quickly become quite popular. It is important to note, however, that one should not focus on a single value. Value pluralism is important.[89] Moreover, the kinds of values chosen may depend on the functionality or purpose of a system. For example, considering findings in game theory and computational social science, one could design next-generation social media platforms in ways that promote cooperation, fairness, trust and truth.[90] Also note that a list of 12 values to support flourishing information societies has recently been proposed.[91]

Democracy by Design

Among the social engineers of the digital age, it seems democracy has often been framed as “outdated technology”.[92] Larry Page once said that Google wanted to carry out experiments, but could not do so, because laws were preventing this.[93] Later, in fact, Google experimented in Toronto, for example, but people did not like it much.[94] Peter Thiel, on the other hand, claimed there was a deadly race between politics and technology, and one had to make the world safe for capitalism.[95] In other words, it seems that, among many leading tech entrepreneurs, there has been little love and respect for democracy (not to mention their interference with democratic elections by trying to manipulate voters).

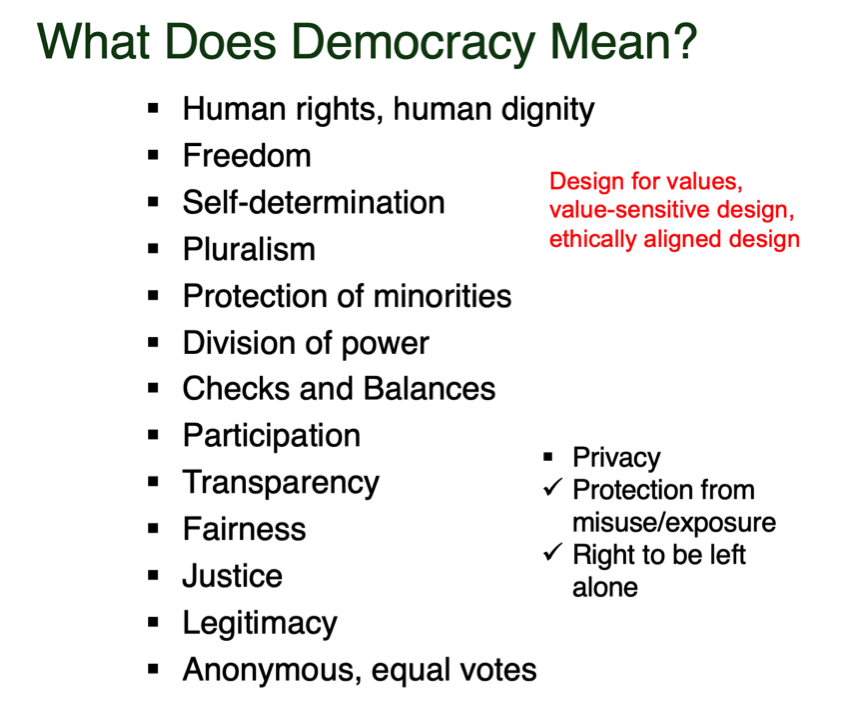

However, democratic institutions did not arise by accident. They are lessons learned from wars, revolutions and other trouble in human history. Can we really afford to ignore them? At least, we should have a broad public debate about this beforehand. Personally, I consider the following democratic values to be relevant: human dignity and human rights, (informational) self-determination, freedom (combined with accountability), pluralism, protection of minorities, division of power, checks and balances, participation, transparency, fairness, justice, legitimacy, anonymous and equal votes, and privacy (in the sense of protection from unfair exposure and the right to be left alone) (see Fig. 5). I am not sure it would benefit society to drop any of these values. Maybe there are even more values to consider. For example, I have been thinking about possible ways to upgrade democracy, by unleashing collective intelligence and constructive collective action, particularly on a local level.[96] New formats such as City Olympics or a Climate City Cup[97] could establish a new paradigm of innovation and change in a way that can engage all stakeholders bottom up, including civil society. In fact, networks of cities and the regions around them could become a third pillar of transformation besides nations and global corporations.

Fairness

I want to end this contribution with a discussion of the power of fairness. People have often asked: Is it good that everyone has one vote? Shouldn’t we have a system in which smart people have more weight? Shouldn’t we replace the democratic “one man one vote” principle by a “one dollar one vote” system? (By the way, we approximately have this today, because of voter manipulation by “big nudging”.) Surprisingly, experimental evidence about the “wisdom of crowds” suggests that giving people different weights, whatever the criteria are, does not improve results.[98] On the contrary, studies in collective intelligence show that largely unequal influence on a debate will reduce social intelligence.[99] Diversity of information sources, opinions, and solution approaches is what makes collective intelligence work.

In conclusion, it seems a fair system based on the principle of equality is the best. In fact, it can be mathematically shown for many complex model systems that they will evolve towards an optimal state if and only if interactions are symmetrical.[100] If symmetry is broken, all sorts of things can happen. However, one can surely say that a hierarchical system or one controlled by utilitarian principles is very unlikely to achieve the best systemic performance. For example, replacing tree-like supply systems by a circular economy could potentially improve the quality of living for everyone while making our economy more sustainable.

Network Effects for Prosperity, Peace and Sustainability

In our increasingly networked world, we currently experience a transition from component-dominated to interaction-dominated systems. The resulting network effects can change everything. Combinatorial innovation (i.e. innovation ecosystems), which would be enabled by a platform for informational self-determination, could boost our economy, particularly the digital one. Supporting collective intelligence (which should be the foundational principle of digital democracies) would benefit society. And a multi-dimensional real-time feedback system (a novel, socio-ecological financial system, which we sometimes call Finance 4.0,[101] FIN4 or FIN+) would be able to promote a sustainable circular and sharing economy and, thereby, help improve the state of nature. In this way, harmony between humans and nature could best be reached, and everyone could benefit.

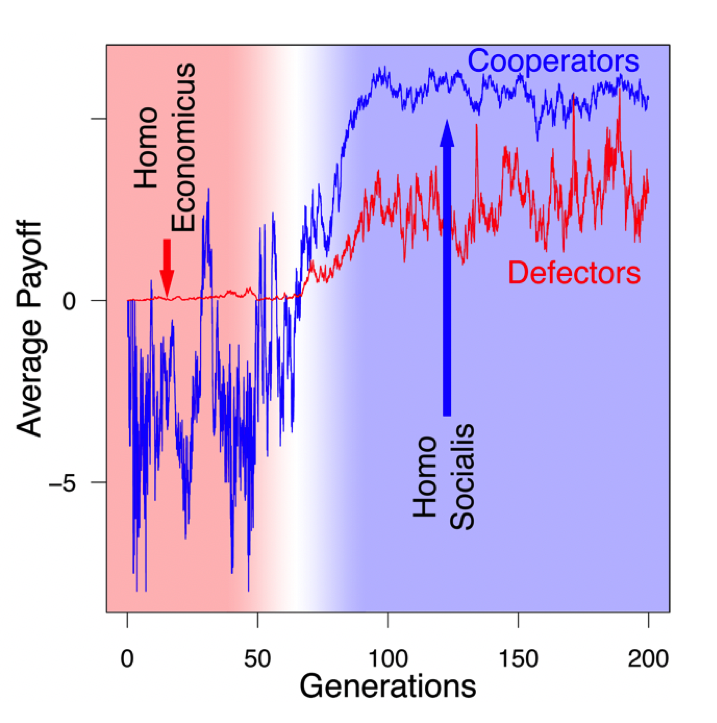

Finally, I would like to draw attention to a computer simulation we have performed in order to understand the evolution of “homo economicus”, the utility-maximizing selfish man assumed in economics. To our surprise we found that, after dozens of generations, people would develop other-regarding preferences and cooperative behavior, if parents raise children in their close neighborhood, as humans do.[102] In fact, compared to other species, it is quite exceptional how many years children spend living with their parents. This makes a big difference, as it makes (most) people social.

There is a huge benefit in this. If people are friendly to each other, they can accomplish more. Benefits will increase. If it happens frequently enough that I help someone and this person helps someone else, then somebody will also help me. In such a way, “networked thinking” will emerge and with it a powerful support network (so-called “social capital”). Such cooperation will cause much higher average success (see Fig. 6).

Interestingly, such networked thinking and benevolent behavior can now be supported with digital technologies.[103] I believe, however, technological telepathy[104] is not needed for this – it might even have negative effects. But altogether, it is entirely in our hands to create a better world. “Love your neighbor as yourself” (i.e. be fair and give the concerns of others [and nature] as much weight as yours) is the simple success principle, which will eventually be able to create prosperity and peace. We could have known this before, but now we have the scientific evidence for it…

Figure 6: Results of computer simulations showing the evolution of “homo socialis” with other-regarding preferences and “networked thinking”, starting with a selfish type of human, called “homo economicus”. The transition is expected to take dozens of generations, but it may just be happening…

All rights reserved Dirk Helbing 2020

_____

Dirk Helbing is Full Professor of Computational Social Science at ETH Zurich and affiliate professor at ETH’s Computer Science Department and the Faculty of Technology, Policy and Management at TU Delft, where he is coordinating the PhD school “Engineering Social Technologies” for a Responsible Digital Future. He is also External Faculty of the Complexity Science Hub Vienna. Before, he was Full Professor of Sociology at ETH Zurich and Managing Director of the Institute for Transport & Economics at Dresden University of Technology. Helbing is also an elected member of the German Academy of Sciences “Leopoldina” and of the World Academy of Arts and Science. In 2014, he received an honorary PhD at TU Delft. He is a member of the Swiss Federal Committee on the Future of Data Processing and Data Security and of committees on the “Digital Society” and on “Digitization and Democracy” of the German Academies of Science.

[1] https://www.spiegel.de/wissenschaft/technik/kuenstliche-intelligenz-gott-braucht-keine-lehrmeister-kolumne-a-1175130.html

[2] https://www.businessinsider.com/anthony-levandowski-religion-worships-ai-god-report-2017-9

https://www.wired.com/story/anthony-levandowski-artificial-intelligence-religion/

[3] https://www.spektrum.de/thema/das-digital-manifest/1375924, in English: https://www.scientificamerican.com/article/will-democracy-survive-big-data-and-artificial-intelligence/

[4] https://www.youtube.com/watch?v=C74amJRp730

[5] https://www.theverge.com/2014/9/3/6102377/google-calico-cure-death-1-5-billion-research-abbvie

[6] https://arxiv.org/abs/1304.3271, https://www.theglobalist.com/google-artificial-intelligence-big-data-technology-future/, https://www.youtube.com/watch?v=BgoU3koKmTg

[7] https://en.wikipedia.org/wiki/Technological_singularity

[8] https://www.amazon.com/Our-Final-Invention-Artificial-Intelligence/dp/1250058783/

[9] https://www.bbc.com/news/technology-30290540

[10] https://www.theguardian.com/technology/2014/oct/27/elon-musk-artificial-intelligence-ai-biggest-existential-threat

[11] https://www.bbc.com/news/31047780

[12] https://www.computerworld.com/article/2901679/steve-wozniak-on-ai-will-we-be-pets-or-mere-ants-to-be-squashed-our-robot-overlords.html

[13] https://www.faz.net/aktuell/feuilleton/debatten/kuenstliche-intelligenz-maschinen-ueberwinden-die-menschheit-15309705.html, https://nzzas.nzz.ch/gesellschaft/juergen-schmidhuber-geschichte-wird-nicht-mehr-von-menschen-dominiert-ld.1322558

[14] https://www.youtube.com/watch?v=ntMy2wk4aFg

[15] https://www.amazon.com/Limits-Growth-Donella-H-Meadows/dp/0451136950/

[16] https://futureoflife.org/open-letter-autonomous-weapons/

[17] https://www.nzz.ch/meinung/autonome-intelligenz-ist-nicht-nur-in-kriegsrobotern-riskant-ld.1351011

[18] https://www.amazon.com/Second-Machine-Age-Prosperity-Technologies/dp/0393350649/

[19] https://www.wired.com/2016/10/obama-aims-rewrite-social-contract-age-ai/

[20] https://www.theguardian.com/technology/2019/jul/17/elon-musk-neuralink-brain-implants-mind-reading-artificial-intelligence

[21] https://www.nzz.ch/zuerich/mensch-oder-maschine-interview-mit-neuropsychologe-lutz-jaencke-ld.1502927

[22] https://www.amazon.com/Apocalyptic-AI-Robotics-Artificial-Intelligence/dp/0199964009/

[23] https://singularityhub.com/2018/02/15/the-power-to-upgrade-our-biology-and-the-ethics-of-human-enhancement/

[24] https://en.wikipedia.org/wiki/Transhumanism

[25] https://www.theguardian.com/technology/2018/jul/06/artificial-intelligence-ai-humans-bots-tech-companies

[26] https://en.wikipedia.org/wiki/Allegory_of_the_Cave

[27] https://arxiv.org/abs/1712.01826

[28] https://www.wired.com/2008/06/pb-theory/

[29] https://www.spektrum.de/thema/das-digital-manifest/1375924

[30] https://www.sueddeutsche.de/digital/fluggastdaten-bka-falschtreffer-1.4419760

[31] https://www.amazon.com/Signal-Noise-Many-Predictions-Fail-but/dp/0143125087

[32] https://www.amazon.com/Spurious-Correlations-Tyler-Vigen/dp/0316339431/

[33] https://www.amazon.com/Black-Box-Society-Algorithms-Information/dp/0674970845/, https://www.technologyreview.com/s/604087/the-dark-secret-at-the-heart-of-ai/

[34] https://emerj.com/ai-future-outlook/nsa-surveillance-and-sentient-world-simulation-exploiting-privacy-to-predict-the-future/

[35] https://www.theregister.co.uk/2007/06/23/sentient_worlds/

[36] https://www.crystalknows.com

[37] https://theintercept.com/2014/02/24/jtrig-manipulation/

[38] https://www.amazon.com/Persuasion-Code-Neuromarketing-Persuade-Anywhere-ebook/dp/B07H9GZMDH/

[39] https://www.theguardian.com/news/2018/may/06/cambridge-analytica-how-turn-clicks-into-votes-christopher-wylie

[40] https://www.extremetech.com/extreme/245014-meet-sneaky-facebook-powered-propaganda-ai-might-just-know-better-know

[41] https://www.theguardian.com/uk-news/2018/sep/13/gchq-data-collection-violated-human-rights-strasbourg-court-rules

[42] https://www.pcwelt.de/ratgeber/Superscoring-Wie-wertvoll-sind-Sie-fuer-die-Gesellschaft-10633488.html

[43] https://www.fastcompany.com/90394048/uh-oh-silicon-valley-is-building-a-chinese-style-social-credit-system

[44] https://www.superscoring.de

[45] https://www.wired.co.uk/article/chinese-government-social-credit-score-privacy-invasion

[46] https://theintercept.com/2015/09/25/gchq-radio-porn-spies-track-web-users-online-identities/

[47] https://www.theguardian.com/global-development/live/2015/sep/25/un-sustainable-development-summit-2015-goals-sdgs-united-nations-general-assembly-70th-session-new-york-live

[48] https://www.businessinsider.com/china-social-credit-system-punishments-and-rewards-explained-2018-4

[49] https://www.spektrum.de/thema/das-digital-manifest/1375924

[50] https://www.amazon.de/Technologischer-Totalitarismus-Eine-Debatte-suhrkamp/dp/3518074342

[51] https://www.scientificamerican.com/article/will-democracy-survive-big-data-and-artificial-intelligence/

[52] https://www.pcwelt.de/ratgeber/Superscoring-Wie-wertvoll-sind-Sie-fuer-die-Gesellschaft-10633488.html

[53] See Appendix A in https://link.springer.com/article/10.1140/epjst/e2011-01403-6, https://papers.ssrn.com/sol3/papers.cfm?abstract_id=1753796

[54] https://m.faz.net/aktuell/feuilleton/medien/politik-der-algorithmen-google-will-den-staat-neu-programmieren-13852715/internet-googles-vision-von-der-totalen-vernetzung-13132960.html

[55] https://www.psychologytoday.com/intl/blog/bouncing-back/201106/the-no-1-contributor-happiness, https://www.forbes.com/sites/georgebradt/2015/05/27/the-secret-of-happiness-revealed-by-harvard-study/

[56] https://www.weforum.org/agenda/2018/11/algorithms-court-criminals-jail-time-fair/, https://www.technologyreview.com/s/612775/algorithms-criminal-justice-ai/

[57] https://www.zdf.de/nachrichten/heute/software-soll-ueber-leben-und-tod-entscheiden-100.html

[58] https://link.springer.com/chapter/10.1007/978-3-319-90869-4_5, https://papers.ssrn.com/sol3/papers.cfm?abstract_id=3110582

[59] https://www.bmvi.de/SharedDocs/DE/Publikationen/DG/bericht-der-ethik-kommission.pdf

[60] https://www.wma.net/policies-post/wma-declaration-of-geneva/

[61] https://www.nature.com/articles/s41586-018-0637-6

[62] https://www.swisstransplant.org/de/organspende-transplantation/rund-umsspenden/wer-erhaelt-die-spende/

[63] https://ict4peace.org/activities/artificial-intelligence-lethal-autonomous-weapons-systems-and-peace-time-threats/

[64] https://www.amazon.com/Learning-Die-Anthropocene-Reflections-Civilization/dp/0872866696/

[65] https://www.amazon.com/Limits-Growth-Donella-H-Meadows/dp/193149858X/

[66] http://christelleboereboom.tk/download/eXqX0Ls4wGQC-een-computermodel-voor-het-ondersteunen-van-euthanasiebeslissingen, https://books.google.ch/books/about/Een_computermodel_voor_het_ondersteunen.html

[67] https://www.amazon.com/Nazi-Connection-Eugenics-American-Socialism/dp/0195149785/, https://en.wikipedia.org/wiki/William_Shockley, https://www.theguardian.com/us-news/2019/aug/18/private-jets-parties-and-eugenics-jeffrey-epsteins-bizarre-world-of-scientists

[68] https://www.nzz.ch/schweiz/seidenstrasse-bundesrat-wollte-vereinbarung-zu-menschenrechten-ld.1479438

[69] https://www.handelsblatt.com/politik/international/menschenrechtsausschuss-china-verweigert-einreise-von-delegationen-des-deutschen-bundestages/24868826.html

[70] https://www.bbc.com/news/election-2017-40181444, https://www.tagesanzeiger.ch/schweiz/standard/Die-SVP-ist-bereit-die-Menschenrechte-zu-opfern/story/12731796, https://www.spiegel.de/politik/ausland/tuerkei-recep-tayyip-erdogan-nennt-hitler-deutschland-als-beispiel-fuer-praesidialsystem-a-1070162.html, https://www.spiegel.de/politik/ausland/japan-vizepremier-taro-aso-nennt-adolf-hitlers-absichten-richtig-a-1165232.html

[71] https://ia800202.us.archive.org/33/items/TheFirstGlobalRevolution/TheFirstGlobalRevolution.pdf

[72] https://theconnectivist.wordpress.com/2019/08/06/log-of-the-rightwing-power-grab-of-society/

[73] https://www.theguardian.com/business/2016/apr/13/climate-change-oil-industry-environment-warning-1968

[74] http://www.geraldbarney.com/G2000Page.html; the report was published in 1980.

[75] https://en.wikipedia.org/wiki/World_energy_consumption

[76] https://www.un.org/en/universal-declaration-human-rights/

[77] https://www.psychologytoday.com/intl/blog/bouncing-back/201106/the-no-1-contributor-happiness, https://www.forbes.com/sites/georgebradt/2015/05/27/the-secret-of-happiness-revealed-by-harvard-study/

[78] https://papers.ssrn.com/sol3/papers.cfm?abstract_id=1804189

[79] https://www.amazon.com/First-Global-Revolution-Report/dp/067171094X/

[80] https://www.edge.org/response-detail/26795

[81] Build Digital Democracy, Nature 527, 33-34 (2015): http://bit.ly/1WCSzi4, Why We Need Democracy 2.0 and Capitalism 2.0 to Survive: http://bit.ly/1O5axWZ

[82] https://de.wikipedia.org/wiki/Menschenwürde, https://en.wikipedia.org/wiki/Dignity

[83] T. Asikis, E. Pournaras, J. Klinglmayer, and D. Helbing, Smart product ratings to support self-determined sustainable consumption, preprint (2019).

[84] https://www.japantimes.co.jp/opinion/2018/04/30/commentary/world-commentary/stop-surveillance-capitalism/, https://www.morgenpost.de/web-wissen/web-technik/article213868509/Facebook-Skandal-Experte-raet-zu-digitalem-Datenassistenten.html

[85] https://www.weforum.org/agenda/2018/03/engineering-a-more-responsible-digital-future

[86] http://designforvalues.tudelft.nl, https://www.tudelft.nl/en/tpm/research/projects/engineering-social-technologies-for-a-responsible-digital-future/

[87] http://designforvalues.tudelft.nl

[88] https://standards.ieee.org/content/dam/ieee-standards/standards/web/documents/other/ead_v2.pdf

[89] https://www.youtube.com/watch?v=sZYH53j5dFc

[90] https://www.amazon.com/Automation-Society-Next-Survive-Revolution/dp/1518835414

[91] https://link.springer.com/chapter/10.1007/978-3-319-90869-4_11, http://futurict.blogspot.com/2016/04/why-we-need-democracy-20-and-capitalism.html

[92] Hencken, Randolph. 2014. In: Mikrogesellschaften. Hat die Demokratie ausgedient? Capriccio. Video, veröffentlicht am 15.5.2014. Autor: Joachim Gaertner. München: Bayerischer Rundfunk.

[93] https://www.businessinsider.com/google-ceo-larry-page-wants-a-place-for-experiments-2013-5

[94] https://www.theguardian.com/cities/2019/jun/06/toronto-smart-city-google-project-privacy-concerns

[95] https://www.businessinsider.com/peter-thiel-is-trying-to-save-the-world-2016-12

[96] How to Make Democracy Work in the Digital Age: https://www.researchgate.net/publication/305571691

[97] http://climatecitycup.org, https://www.youtube.com/watch?v=lX4OQ1mDEA4

[98] https://www.amazon.com/Difference-Diversity-Creates-Schools-Societies/dp/0691138540/

[99] https://science.sciencemag.org/content/330/6004/686.short

[100] https://iopscience.iop.org/article/10.1088/1367-2630/1/1/313/meta, https://link.springer.com/article/10.14441/eier.D2013002

https://www.researchcollection.ethz.ch/bitstream/handle/20.500.11850/286469/D.3.2ReportonFinance4.0Concept%28M12report%29-PUBLICv2.pdf

FuturICT 2.0: Towards a sustainable digital society with a socio-ecological finance system (FIN4)

https://adobe.ly/3amwsFe

The FIN4 project: Towards a socio-ecological finance system

https://bit.ly/2VJEqEE

https://www.amazon.com/Quantitative-Sociodynamics-Stochastic-Interaction-Processes/dp/3642115454/

[101] https://link.springer.com/article/10.14441/eier.D2013002

[102] https://www.nature.com/articles/srep01480

[103] https://patents.google.com/patent/US20160350685A1/en, https://link.springer.com/chapter/10.1007/978-3-319-90869-4_17

[104] https://www.forbes.com/sites/forbestechcouncil/2018/12/04/is-tech-boosted-telepathy-on-its-way-nine-tech-experts-weigh-in/, https://www.singularityweblog.com/telepathic-technology-is-here-but-are-we-ready/